Governor Gavin Newsom was busy last week. He signed not one, not two, not three … but eight deepfake bills into law in California. TechCrunch has an excellent summary of the new laws. Plus, there are 30 more AI bills for him to sign or veto, including the highly controversial safety bill SB 1047. Stay tuned on that one.

Are the AI Laws All Constitutional?

The eight AI bills signed into law last week make for an excellent, and challenging, constitutional law exam.

The legislative impulse to get ahead of AI deepfakes and unauthorized digital replicas of people’s voices and likenesses is understandable. AI deepfakes can create problems in a variety of contexts: deception, depictions of real people in sexually explicit content without their permission, unauthorized use of a performer’s likeness or voice, and fake content that could affect voters’ views of political candidates.

Yet it’s also important to ask whether any of the AI laws might raise constitutional questions, at least. We think some definitely do; others probably do not.

Here’s our quick, very tentative, and preliminary reaction to the new laws and the constitutional questions they may raise.

To be crystal clear: the analysis below does not contend that the laws are unconstitutional. Instead, it flags the laws that are likely to raise constitutional concerns, if not challenges.

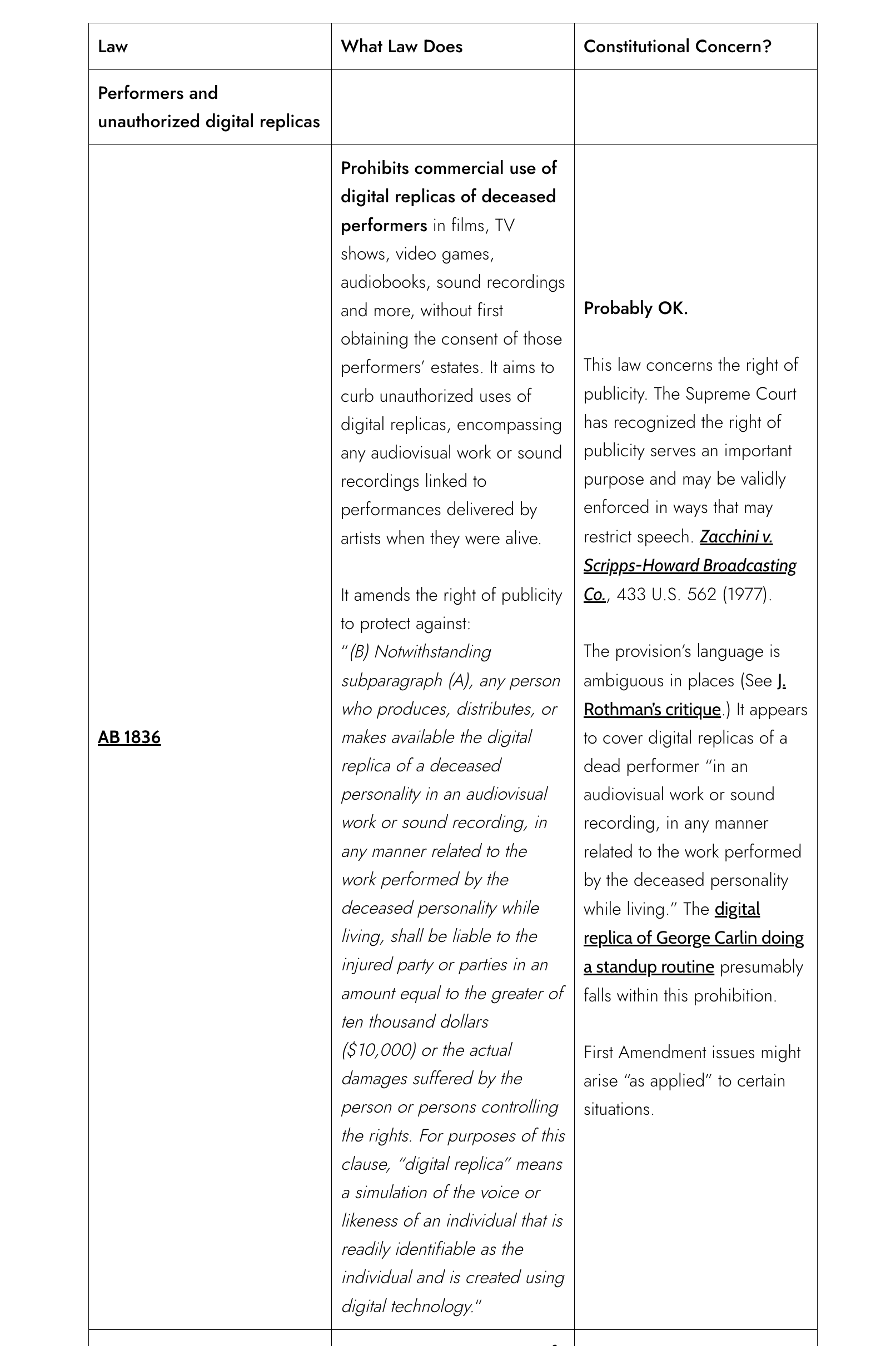

| Law | What the Law Does | Constitutional Concern? |
| --- | --- | --- |
| Performers and unauthorized digital replicas | | |
| AB 1836 | Prohibits commercial use of digital replicas of deceased performers in films, TV shows, video games, audiobooks, sound recordings, and more without first obtaining the consent of those performers’ estates. It aims to curb unauthorized uses of digital replicas, encompassing any audiovisual work or sound recording linked to performances delivered by artists when they were alive. It amends the right of publicity to provide: “(B) Notwithstanding subparagraph (A), any person who produces, distributes, or makes available the digital replica of a deceased personality in an audiovisual work or sound recording, in any manner related to the work performed by the deceased personality while living, shall be liable to the injured party or parties in an amount equal to the greater of ten thousand dollars ($10,000) or the actual damages suffered by the person or persons controlling the rights. For purposes of this clause, ‘digital replica’ means a simulation of the voice or likeness of an individual that is readily identifiable as the individual and is created using digital technology.” | Probably OK. This law concerns the right of publicity. The Supreme Court has recognized that the right of publicity serves an important purpose and may be validly enforced in ways that restrict speech. See Zacchini v. Scripps-Howard Broadcasting Co., 433 U.S. 562 (1977). The provision’s language is ambiguous in places (see J. Rothman’s critique). It appears to cover digital replicas of a deceased performer “in an audiovisual work or sound recording, in any manner related to the work performed by the deceased personality while living.” A digital replica of George Carlin performing a stand-up routine, for example, would presumably fall within this prohibition. First Amendment issues might still arise “as applied” to particular situations. |
| AB 2602 | Requires contracts to specify the intended uses of AI-generated digital replicas of a performer’s voice or likeness, and requires that the performer be professionally represented in negotiating the contract. The bill provides that a provision in an agreement between an individual and any other person for the performance of personal or professional services is unenforceable only as it relates to a new performance, fixed on or after January 1, 2025, by a digital replica of the individual if the provision meets specified conditions relating to the use of a digital replica of the individual’s voice or likeness in lieu of the individual’s work. Section 927(a): “A provision in an agreement between an individual and any other person for the performance of personal or professional services is unenforceable only as it relates to a new performance, fixed on or after January 1, 2025, by a digital replica of the individual if the provision meets all of the following conditions: (1) The provision allows for the creation and use of a digital replica of the individual’s voice or likeness in place of work the individual would otherwise have performed in person. (2) (A) Except as provided in subparagraph (B), the provision does not include a reasonably specific description of the intended uses of the digital replica. (B) Failure to include a reasonably specific description of the intended uses of a digital replica does not render the provision unenforceable if the uses are consistent with the terms of the contract for the performance of personal or professional services and the fundamental character of the photography or soundtrack as recorded or performed. (3) The individual was not represented in any of the following manners: (A) By legal counsel who negotiated on behalf of the individual licensing the individual’s digital replica rights, and the commercial terms are stated clearly and conspicuously in a contract or other writing signed or initialed by the individual. (B) By a labor union representing workers who do the proposed work, and the terms of their collective bargaining agreement expressly addresses uses of digital replicas.” | Contracts Clause. Raises a question whether the law impairs the obligation of existing contracts under the U.S. Constitution and the California Constitution. For a general explanation of past precedents involving the Contracts Clause, see Gary S. Ganchrow, Understanding the contract clause of the US Constitution, Daily J. (Jun. 15, 2020). Some impairments are allowed, but others go too far. |
| SB 942 | This bill, the California AI Transparency Act, requires a covered provider, as defined, to make available at no cost to the user an artificial intelligence (AI) detection tool that meets certain criteria, including that the tool is publicly accessible. The bill requires a covered provider to offer the user an option to include a manifest disclosure in image, video, or audio content, or content that is any combination thereof, created or altered by the covered provider’s generative artificial intelligence (GenAI) system that, among other things, identifies the content as AI-generated and is clear, conspicuous, appropriate for the medium of the content, and understandable to a reasonable person. The bill also requires a covered provider to include a latent disclosure in AI-generated image, video, or audio content, or content that is any combination thereof, created by the covered provider’s GenAI system that, among other things, to the extent technically feasible and reasonable, conveys certain information regarding the provenance of the content, either directly or through a link to a permanent internet website. A covered provider that knows a third-party licensee modified a licensed GenAI system so that it is no longer capable of including these disclosures in content the system creates or alters must revoke the license within 96 hours of discovering the licensee’s action, and the third-party licensee must cease using the licensed GenAI system after the license has been revoked. A covered provider that violates these provisions is liable for a civil penalty of $5,000 per violation, to be collected in a civil action filed by the Attorney General, a city attorney, or a county counsel, as prescribed. For a third-party licensee that fails to cease using a licensed GenAI system after its license has been revoked, the Attorney General, a county counsel, or a city attorney may bring a civil action for injunctive relief and reasonable attorney’s fees and costs. The bill’s provisions become operative on January 1, 2026. | First Amendment. The law requires covered providers (1) to offer users an AI detection tool, (2) to give users the option of including an AI-generated label or disclosure in their content, and (3) to include a latent AI disclosure in AI-generated content produced by the provider’s own GenAI system. The technology mandates (1 and 2) might raise a First Amendment question, although the law does not require any filtering. The Supreme Court upheld the Children’s Internet Protection Act, which required libraries receiving federal funds to install Internet filtering to block obscene and pornographic images and to prevent minors from accessing material harmful to them. See United States v. American Library Assn., Inc., 539 U.S. 194 (2003). The California law, however, is not an exercise of the spending power, which cuts against relying on that decision; on the other hand, it does not require any filtering of content, only tools that enable the detection and labeling of AI-generated content. Mandatory labeling (3) raises a question of compelled speech. Courts review labeling requirements (especially those aimed at correcting deception) under a more lenient standard than strict scrutiny. See Zauderer v. Office of Disciplinary Counsel, 471 U.S. 626, 651 (1985); American Meat Institute v. U.S. Dept. of Agriculture, 760 F.3d 18 (D.C. Cir. 2014). |
| Election Laws | | |
| AB 2655 | The Defending Democracy from Deepfake Deception Act of 2024 requires large online platforms to remove or label deceptive and digitally altered or created content related to elections during specified periods, and to provide mechanisms for reporting such content. It also authorizes candidates, elected officials, elections officials, the Attorney General, and a district attorney or city attorney to seek injunctive relief against a large online platform for noncompliance with the act. | First Amendment. Raises a First Amendment question of content-based discrimination. The Supreme Court has recognized that false speech is not categorically excluded from First Amendment protection. However, the level of scrutiny the Court would apply to a particular law is open to debate. In the plurality opinion in United States v. Alvarez, 567 U.S. 709 (2012), Justice Kennedy (joined by three other Justices) would apply strict scrutiny to the Stolen Valor Act because it did not fall within traditional exceptions for fraud, defamation, perjury, and the like. (Justices Breyer and Kagan would apply intermediate scrutiny, and Justices Alito, Scalia, and Thomas would treat lies about receiving a military medal as unprotected speech.) The Supreme Court also recently recognized that Internet platforms have a First Amendment right to make editorial decisions about the content on their platforms. See Moody v. NetChoice, LLC (2024). Does an election change the First Amendment analysis? Probably not (outside of candidates or their surrogates using deceptive deepfakes against each other). A lawsuit challenging this law has already been filed. See Kohls v. Bonta. For general discussion, see Richard Painter, Deepfake 2024: Will Citizens United and Artificial Intelligence Together Destroy Representative Democracy?, 14 J. National Security Law & Policy (2023). |
| AB 2839 | An urgency measure (Elections: deceptive media in advertisements) that expands the timeframe in which a committee or other entity is prohibited from knowingly distributing an advertisement or other election material containing deceptive AI-generated or manipulated content. The bill also expands the scope of existing law to prohibit materially deceptive content depicting elected officials, candidates, elections officials, and others, and authorizes them to file a civil action to enjoin the distribution of such material. | First Amendment. Raises a First Amendment question of content-based discrimination. However, the level of scrutiny the Court would apply to a particular law is open to debate. In the plurality opinion in United States v. Alvarez, 567 U.S. 709 (2012), Justice Kennedy (joined by three other Justices) would apply strict scrutiny to the Stolen Valor Act because it did not fall within traditional exceptions for fraud, defamation, perjury, and the like. (Justices Breyer and Kagan would apply intermediate scrutiny, and Justices Alito, Scalia, and Thomas would treat lies about receiving a military medal as unprotected speech.) A lawsuit challenging this law has already been filed. See Kohls v. Bonta; see also the discussion of AB 2655 above. |
| AB 2355 | Amends the Political Reform Act of 1974 (political advertisements: artificial intelligence). The law requires that electoral advertisements using AI-generated or substantially altered content include a disclosure that the material has been altered. The bill authorizes the Fair Political Practices Commission to enforce these disclosure requirements by seeking injunctive relief to compel compliance or by pursuing other remedies available to the commission under the Political Reform Act. | First Amendment. Mandatory labeling raises a question of compelled speech. Courts review labeling requirements (especially those aimed at correcting deception) under a more lenient standard than strict scrutiny. See Zauderer v. Office of Disciplinary Counsel, 471 U.S. 626, 651 (1985); American Meat Institute v. U.S. Dept. of Agriculture, 760 F.3d 18 (D.C. Cir. 2014). Zauderer involved an attorney’s advertising. The Court held that an advertiser’s rights are adequately protected as long as disclosure requirements are “reasonably related to the State’s interest in preventing deception of consumers.” Because Zauderer concerned commercial advertising, whether its more lenient standard extends to disclosures in political advertisements is a further question. |
| Deepfake Nudes | | |
| SB 926 | Crimes: distribution of intimate images. Makes it a crime for a person who is 18 years of age or older to intentionally create and distribute, or cause to be distributed, any photorealistic image, digital image, electronic image, computer image, computer-generated image, or other pictorial representation of an intimate body part or parts of another identifiable person, or an image of the person depicted engaged in an act of sexual intercourse, sodomy, oral copulation, or sexual penetration, or an image of masturbation by the person depicted or in which the person depicted participates, that was created in a manner that would cause a reasonable person to believe the image is an authentic image of the person depicted, under circumstances in which the person distributing the image knows or should know that distribution of the image will cause serious emotional distress, and the person depicted suffers that distress. By expanding the scope of a crime, this bill imposes a state-mandated local program. | First Amendment. Raises a question of a content-based restriction under the First Amendment. As noted above, false speech is not categorically unprotected, and under the Alvarez plurality, government restrictions on it receive strict scrutiny outside of certain traditional categories. Ashcroft v. Free Speech Coalition, 535 U.S. 234 (2002), held that even virtual child pornography, produced without using actual children, is protected by the First Amendment unless it is obscene. The California legislature tried to narrowly tailor this law to a limited scenario. SB 926 requires (1) depictions of “another identifiable person”; (2) an image created in a manner that would cause “a reasonable person to believe the image is an authentic image of the person depicted”; (3) that the defendant have the scienter of “knows or should know that distribution of the image will cause serious emotional distress”; and (4) that “the person depicted suffers that distress.” The misdemeanor resembles a tort claim for negligent infliction of emotional distress, with shades of misrepresentation or defamation (the reasonable-person authenticity requirement) and invasion of privacy. That resemblance doesn’t necessarily avoid the First Amendment question, but, given the plurality decision in Alvarez, it helps that the statute resembles traditional torts. Even though the law is statutory, the state can argue that its prohibition is analogous to these well-recognized traditional torts. |
| SB 981 | Sexually explicit digital images. Existing law generally regulates social media platforms, including by requiring a social media platform to provide, in a mechanism that is reasonably accessible to users, a means for a user who is a California resident to report material to the platform that the user reasonably believes is, among other things, child sexual abuse material. This bill requires a social media platform to provide a mechanism that is reasonably accessible to a reporting user who is a California resident and has an account with the platform to report sexually explicit digital identity theft. The bill defines “sexually explicit digital identity theft” as the posting of covered material on a social media platform, and defines “covered material” as material that meets certain criteria, including that the material is an image or video created or altered through digitization that would appear to a reasonable person to be an image or video of an intimate body part of an identifiable person or of an identifiable person engaged in certain sexual acts, and that the reporting person is the person depicted in the material and did not consent to the use of the reporting person’s likeness in the material. The bill also requires a social media platform to immediately remove a reported instance of sexually explicit digital identity theft from public view on the platform if the platform determines there is a reasonable basis to believe the reported material is sexually explicit digital identity theft, as prescribed. | First Amendment. Might raise a First Amendment question by mandating that social media platforms (1) provide a reporting mechanism and (2) remove sexually explicit digital identity theft. The narrow category of “sexually explicit digital identity theft” might help the argument that this law is narrowly tailored to achieve a compelling state interest. Alternatively, the Supreme Court might view an “identity theft” law as a general law regulating theft that is not subject to strict scrutiny. For discussion of identity theft laws and the First Amendment, see Philip F. DiSanto, Blurred Lines of Identity Crimes: Intersection of the First Amendment and Federal Identity Fraud, 115 Columbia L. Rev. 941 (2015). |